Who Gets the Shield

YouTube is expanding its likeness-detection system to a pilot group of government officials, political candidates, and journalists, the company announced Wednesday. Participants must upload a government ID and a video selfie to enroll. YouTube declined to disclose the full participant list and would not confirm whether President Trump is among those enrolled. Once enrolled, members can review videos the system flags for using their face and file privacy complaints requesting removal.

How the Scan Works

The software scans every new upload for faces that match enrolled users. When it finds a match, the affected person receives an alert and can watch the flagged clip inside a private dashboard. If they believe the video is an unauthorized impersonation, they can submit a takedown request. YouTube stresses that parody and satire remain protected, so a request does not guarantee removal. The platform's human reviewers weigh each complaint against those free-expression protections.
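The review flow described above can be pictured as a small state machine. The sketch below is purely illustrative: YouTube has not published how its system is built, and every name in it (`Status`, `FlaggedVideo`, `person_decides`, `human_review`) is hypothetical. It models only the steps the company describes, from alert to takedown request to human review, and leaves out face matching entirely.

```python
from dataclasses import dataclass
from enum import Enum, auto


# Hypothetical states for one flagged video; not YouTube's actual data model.
class Status(Enum):
    FLAGGED = auto()             # match found; enrolled person alerted, clip visible in dashboard
    DISMISSED = auto()           # person reviewed the clip and let it stand
    TAKEDOWN_REQUESTED = auto()  # person filed a privacy complaint
    REMOVED = auto()             # human reviewer upheld the complaint
    KEPT = auto()                # reviewer judged it protected expression (e.g. parody or satire)


@dataclass
class FlaggedVideo:
    video_id: str
    enrolled_person: str
    status: Status = Status.FLAGGED

    def person_decides(self, is_impersonation: bool) -> None:
        # The enrolled person either requests a takedown or lets the video stand.
        if self.status is not Status.FLAGGED:
            raise ValueError("decision already made")
        self.status = Status.TAKEDOWN_REQUESTED if is_impersonation else Status.DISMISSED

    def human_review(self, is_parody_or_satire: bool) -> None:
        # A request does not guarantee removal: protected expression is kept.
        if self.status is not Status.TAKEDOWN_REQUESTED:
            raise ValueError("no pending takedown request")
        self.status = Status.KEPT if is_parody_or_satire else Status.REMOVED


# Usage: a takedown request on a satirical clip ends with the video kept.
case = FlaggedVideo("video-123", "candidate-a")
case.person_decides(is_impersonation=True)
case.human_review(is_parody_or_satire=True)
print(case.status)  # Status.KEPT
```

Separating the person's decision from the reviewer's decision mirrors the article's point that filing a complaint and winning a removal are two different steps.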

Why Creators Rarely Hit Delete

Since the tool opened to all YouTube creators last year, only a trickle of videos has been challenged. "Most of it turns out to be fairly benign or additive to their overall business," said Amjad Hanif, vice president of creator products. The company sees the low dispute rate as evidence that much AI-generated likeness use is promotional rather than malicious. Hanif added that YouTube is now exploring whether to let people claim ad revenue from videos that use their likeness, an approach that would mirror the platform's existing Content ID system for music and video clips.

From Hollywood to the Hill

YouTube began building the detector in 2024 with help from Creative Artists Agency and tested it with high-profile creators such as MrBeast and Marques Brownlee. The political program will eventually cover "any government official, political candidate and journalist," Leslie Miller, vice president of government affairs and public policy, told reporters. CEO Neal Mohan has named "AI transparency and protections" among his top priorities for 2026, an agenda that includes mandatory labels for AI-generated content and faster removal of harmful synthetic media.

The Voice Frontier

Facial likeness is only the first step. Hanif confirmed that engineers are now training the system to detect voice impersonations, a feature not yet ready for release. Extending detection to audio could give radio hosts, podcasters, and voice-over artists the same alert-and-review rights already available to people whose faces are cloned.

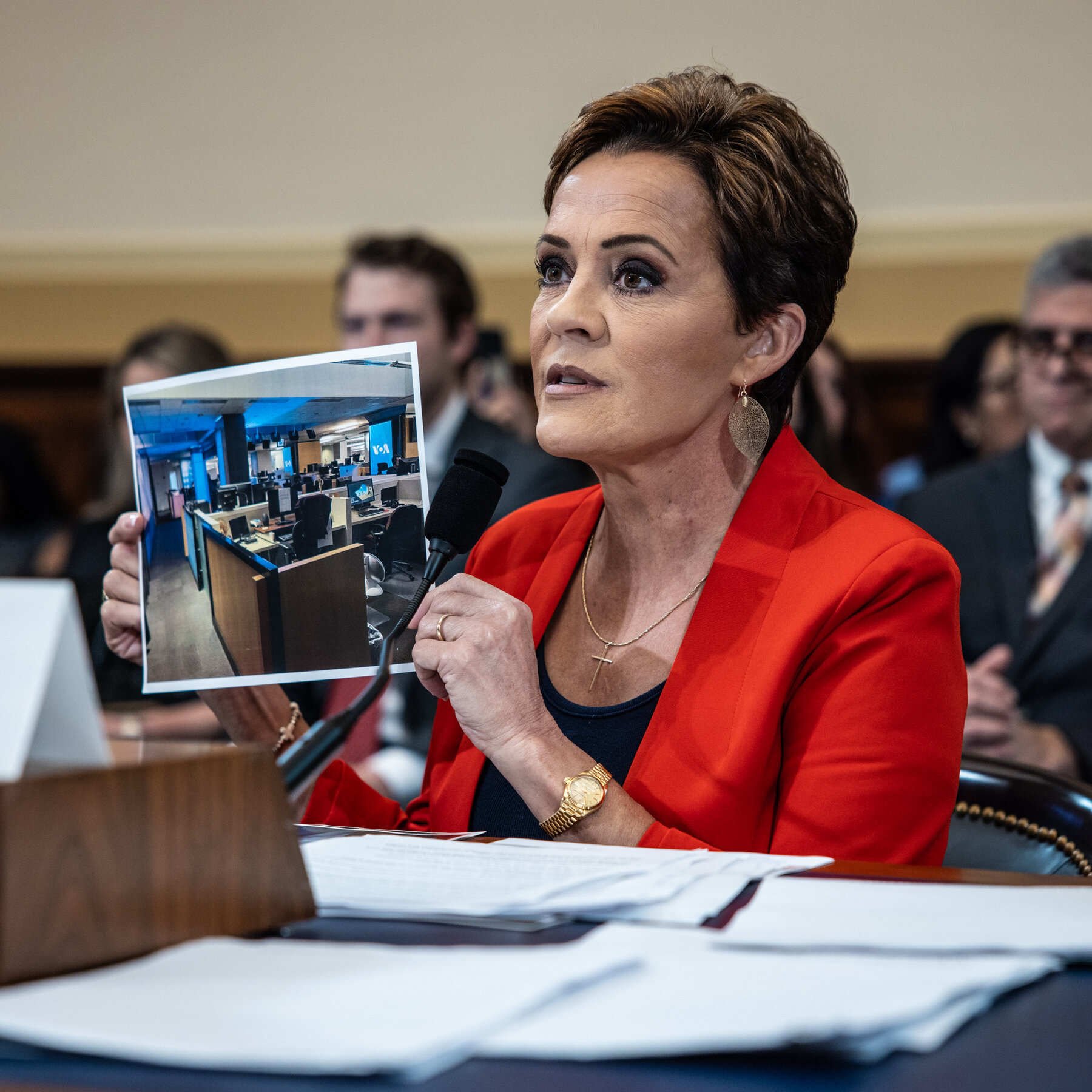

The Legislative Shadow

Congress and the Trump administration have both singled out deepfakes as a policy target. Trump signed the TAKE IT DOWN Act last year, targeting non-consensual intimate imagery, including AI-generated fakes. Broader federal legislation has stalled, though Miller noted that YouTube has endorsed the NO FAKES Act, a pending bill that would require platforms to act quickly on takedown requests for synthetic likenesses. "We think that bill provides a critical blueprint across the U.S. and worldwide to ensure that technology always serves human creativity, not the other way around," she said.